The Natural Philosopher’s Centaur

Yesterday I wrote a post about Tyler Cowen’s argument that the research paper is dying in economics. That post was a collaboration between me and Claude. I wrote it, to be clear. But Claude helped me build an outline for it after a lengthy conversation, and Claude (and ChatGPT) helped me edit it. All faults that remain are, as they say, mine. But here is one way of thinking about it, given what I said in yesterday’s post: I brought the questions and the disposition, Claude brought the analytical horsepower, and neither of us could have written it alone.

I want to talk about that process, because I think it matters more than the post itself.

The Centaur, Revisited

Back in September 2023, Ethan Mollick and his co-authors published a landmark study on how consultants at BCG used AI. They found that consultants using GPT-4 finished more tasks, finished them faster, and produced higher quality results. Quaint what those prehistoric fellows were doing back in 2023, yes, sure. But the interesting finding was, even back then, in the how. The best performers fell into two camps: centaurs, who divided labour strategically between themselves and the AI across phases, and cyborgs, who interwove their work with the AI moment by moment.

Three years on, Mollick tweeted something that I think goes one level deeper: technology always deskills us on something (we lost cursive handwriting, and our parents lost the slide rule). We will lose skills over time, and that’s fine. I knew how to use log tables at one point in time, and it simply does not matter now. Neither do z and t tables today, but again, that is a whole other story.

But the important thing is whether we make deliberate choices about which skills to keep and which to let go, or whether we fail to notice our own ability to make those choices atrophying.

This is one level up from the original question in Ethan Mollick’s 2023 post, which was “How should/do humans and AI divide labour?”. The one-level-up question is actually two questions. First: what makes one centaur better than another? Here, I think the underlying question is this: what determines whether the centaur goes somewhere interesting, or merely goes fast?

And second: if you think of yourself as a centaur or a cyborg, what properties does the AI bring to the table? And given those properties, what complementary skillsets should you be bringing to the table? The underlying question here is one of the points I made in yesterday’s blogpost. The AI half of the centaur will update itself every three months or so (and it used to be six months or so three months ago). Your skills need to remain complementary over time (treadmill), not just for now (monument).

The Horse’s Eyes

Let me describe what the horse half of this particular centaur actually does today. Here’s what happened with yesterday’s post. I read Tyler’s post. I had a reaction: “go one level up.” I brought that reaction to Claude. Claude produced a first draft. It was structurally sound but not deep enough. I said so, and here’s the important part: I didn’t just critique, I generated five specific directions the post needed to go. Claude engaged with each one. Arin Dube’s tweet arrived midway through our conversation, and I saw how it might help. My 2018 post on choices, costs, horizons and incentives became the analytical spine. Claude produced a second draft. I rewrote that draft in full, and changed it considerably. I ran the result past both ChatGPT and Claude, and then I hit publish.

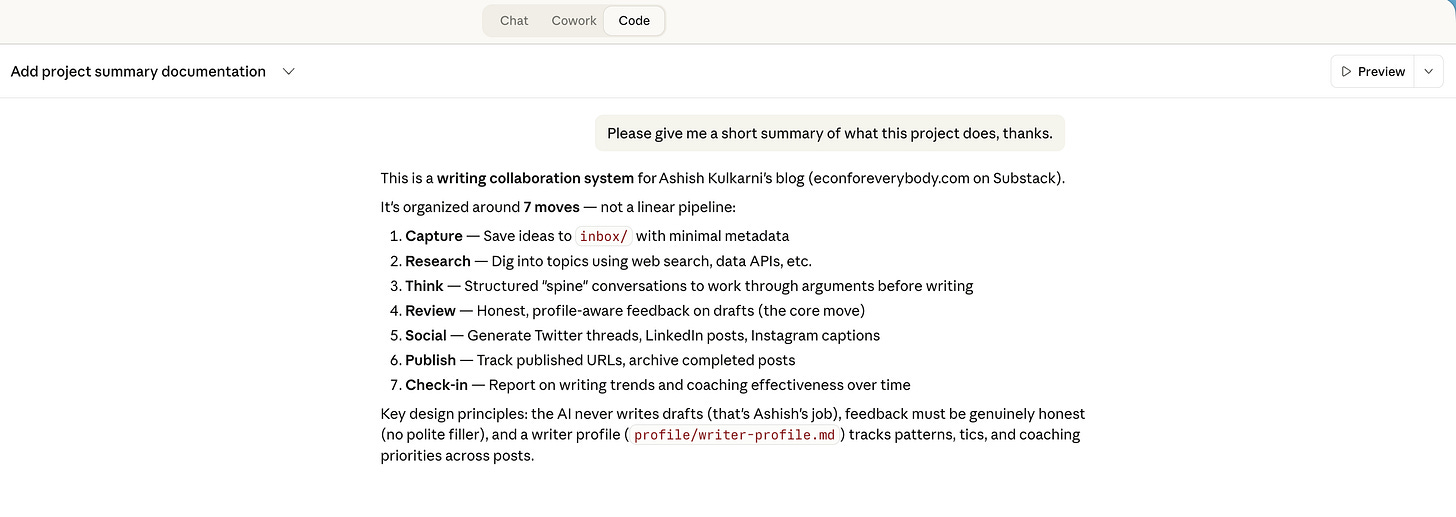

This is not a fixed workflow for me. I experiment with different ones for different blog posts. There are drafts I will write out entirely by hand, and only give to an LLM to check. I have an entire process on my local machine that I use for other blog posts (see screenshot below). And there is a rather infamous one where I tricked my readers. Sometimes a cyborg, sometimes a centaur, and in both cases, I don’t yet know what workflow feels most natural to me. I’m still very much in the experimental phase, and maybe this will last forever (yay!).

So what is Claude doing in all of this, regardless of which particular workflow I use? It is doing what a horse does best. It is traversing the knowledge landscape without tiring, and/or drilling down on specific points without tiring. It is a horse with endurance and strength.

But that undersells it. Claude was also spotting features of the terrain. If I say we should head in that direction because x, Claude is very, very good at saying “Yes, and also y, z…and a, b and c as a bonus!”. Once you point it in a particular direction, the horse can see, and see more than you possibly can. What it cannot do (or at least, will not do for now) is choose where to go. Go, or dig? And if it is to be go, in which direction?

That’s the rider’s job.

What Makes a Good Rider?

Don’t focus on the horse, though that is our wont. We all tend to rhapsodize about how powerful the model is, and what’s inside the frontier and what remains outside. Mollick’s jagged frontier is a map of the horse’s capabilities. But the quality of the centaur depends at least as much on the rider.

So what does a good rider bring? What should a good rider bring?

Direction. Breadth or depth? Should we explore the Lucas Critique implications, or drill deeper into Dube’s finding? This is judgment, not technique. It’s the ability to sense which thread matters and which is a tangent. It is also the ability to decide when a tangent or a digression is worthwhile. And what makes it worthwhile may be injecting a richer, related idea from another domain into the discourse. Or it could be injecting some levity into the proceedings because it is all too dreary otherwise. That’s your call as the rider, and you should be making it. That is what makes the post yours.

Disposition. The habit of asking weird questions. The refusal to accept the first framing. The instinct to go one level up, and then to go across into whatever domain that level requires. Ask random questions! That practice isn’t pedagogical whimsy. It’s training for the only skill the horse can’t provide. Is the Tinbergen principle just a way to restate the pigeonhole principle? That’s a great weird question!

Stakes. When I publish a post, my name is on it. My students might read it, my colleagues might read it, public intellectuals might read it. I have skin in the game. That changes how I evaluate what Claude produces — not just “is this good?” but “can I stand behind this?” That quality filter comes from having a reputation to risk and a community to face. The horse, however eagle-eyed, has no reputation. You have skin in this game in a way that the model does not. That’s not a risk, it is a blessing. Use it to your advantage.

And there is a fourth thing, the one that I think matters most.

Quality, Not Virality

There are two kinds of prompts you can give the genie.

The first: “Give me five topics to create a video about today, optimizing for virality on my channel.”

The second: “I was thinking about the post that we wrote yesterday, and here’s what’s gnawing at me.”

Both use the same technology. Both produce outputs. But they do very different things to the human who issues them.

The first prompt atrophies the choosing muscle. Each time you outsource “what should I think about?” to the genie, the muscle weakens. You’re training yourself to not-choose. You’re falling asleep at the wheel, to use Mollick’s phrase from the BCG study, but at a deeper level — not just failing to catch the AI’s errors, but failing to generate your own questions.

The second prompt strengthens the choosing muscle. It forces you to introspect before you engage. To notice the itch. To identify what’s unresolved. To articulate, even roughly, the direction you want to go in. The AI then helps you chase it. But the noticing? It is yours. And it is precious.

The virality prompt doesn’t engage on the quality dimension at all. It outsources direction to a metric. The gnawing prompt starts from thinking about quality — from the felt sense that something matters, that something is unresolved, that the conversation needs to go further. Remember, the model is currently stateless during pauses in a conversation. You, hopefully, are not. That’s an advantage you have, and you should double down on it.

The age of AI should make you think harder than ever before, and if you don’t see it that way, I say you’re doing it wrong.

Natural Philosophers Make the Best Centaurs

This, I think, is the real answer to what makes one centaur better than another.

The good centaur isn’t just the one with the best horse, or the one who divides labour most efficiently. The good centaur is the one whose rider has the disposition of a natural philosopher: curious across domains, stubbornly committed to following questions wherever they lead, and oriented by quality rather than metrics.

The Royal Society’s motto — Nullius in verba, take nobody’s word for it — is a rider’s motto. Don’t accept the default framing. Don’t let the horse choose the direction. Don’t outsource the question. Go look for yourself, ask for yourself, and care enough about the answer to put your name on it.

Yesterday’s post argued that the binding constraint on economic knowledge isn’t execution. It is imagination, the ability to generate questions that the existing paradigm doesn’t hand you. Arin Dube’s data confirmed it: LLMs haven’t increased paper output, because RAs were never the scarce input.

But there’s a deeper version of this finding. Perhaps the people who produce the good papers aren’t using AI to produce more papers at all. They’re using it to think more carefully about fewer things. The production function doesn’t shift outward. It shifts inward — toward depth, toward quality, toward the thing that’s gnawing at you.

The economists asking for five paper topics optimised for citations are on the virality prompt. The rest, hopefully, are on the gnawing prompt. Both are riding the same horse, sure. But only one of them is becoming a better rider. Only one of them is focusing on Ethan’s bottom line in his tweet today: “What is important is whether we will make deliberate choices about what skills to keep & which they will be.”

If you aren’t asking weird questions, you aren’t learning. And if you aren’t choosing your own questions… that is, if you aren’t cultivating a feeling for what gnaws at you, you aren’t really riding at all. You’re just a passenger.

Ask weird questions. Choose them yourself. Think about what they mean.

Then ride. And keep on ridin’!